“Easy to deploy” is one of the most overused phrases in industrial automation. Every vendor says their system is plug-and-play. Every demo runs flawlessly on a clean booth floor. So when we set out to write about Physical AI’s ease of use, we wanted to skip the marketing gloss and get a candid read from someone who deploys these systems for a living.

Jimmy Li is a Physical AI expert at Vention. He works on the perception, planning, and manipulation pipeline behind Vention’s Rapid Operator AI, the system that brings unstructured pick-and-place to mid-market and enterprise manufacturers. He advises manufacturing teams on whether their use cases are a good fit for Physical AI and what it would take to go from CAD to a functioning robot cell.

![]()

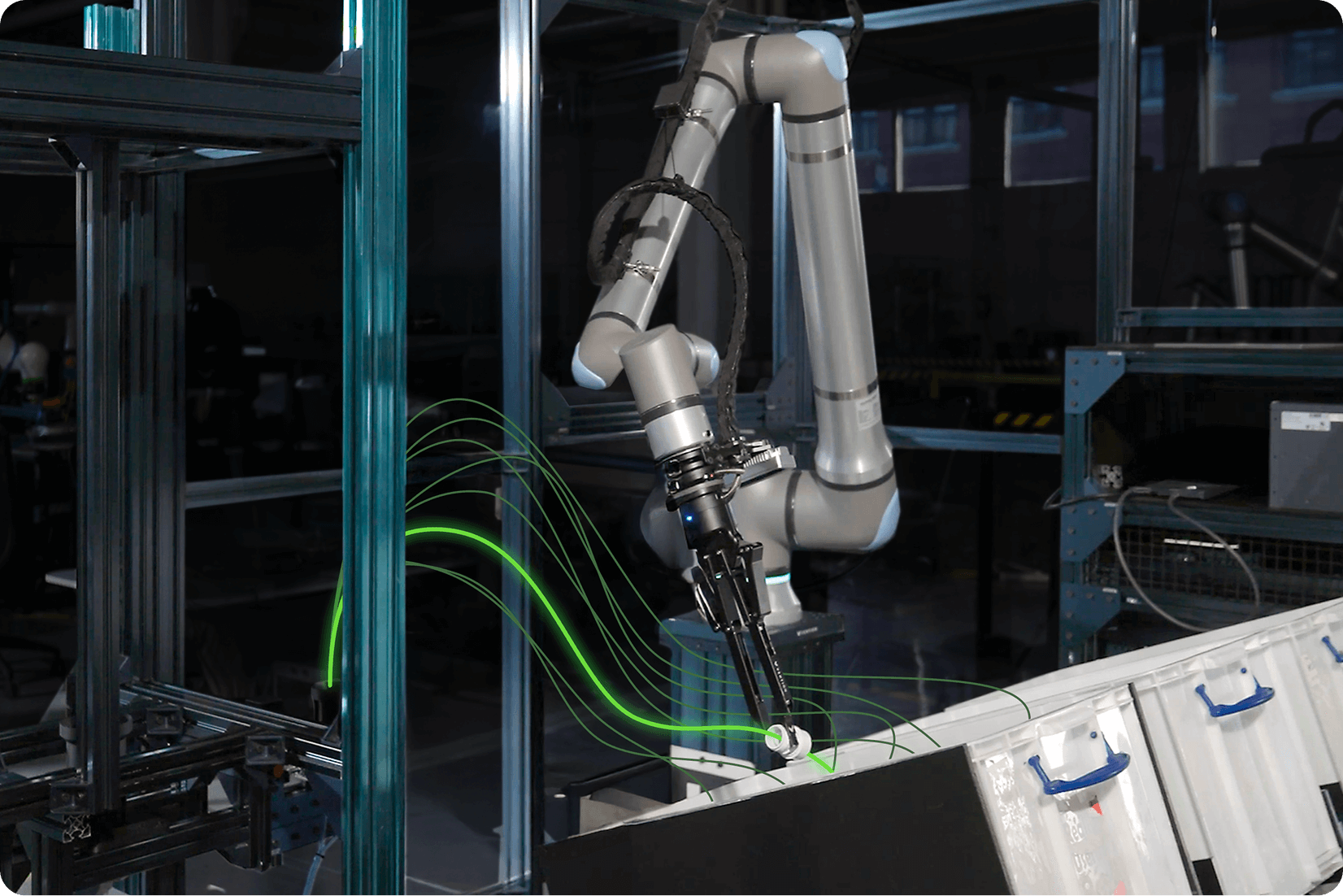

Vention’s Jimmy Li and Gulliaume Boulianne working on Rapid Operator AI (in the background).

We sat down with Jimmy to walk through four things: whether Physical AI is genuinely easier to deploy than it used to be, what daily use actually looks like, how to know if a solution will scale, and what’s coming next that operations and engineering leaders should have on their radar.

Is Physical AI actually easy to deploy?

Compared with five or ten years ago, has deployment really gotten easier?

It’s now meaningfully easier than 15 years ago, and a lot of that comes down to the maturation of foundation models. We can now use zero-shot models that are trained on huge amounts of data and generalize to new tasks almost immediately. NVIDIA FoundationStereo can estimate depth from any pair of stereo RGB images. Meta’s Segment Anything can isolate the regions of an image that contain the object you want to pick, no training required. FoundationPose can estimate the pose of an object from a CAD model alone. None of these need task-specific training.

These models are also much more robust to environmental conditions than what came before. Lighting used to be a major issue. It isn’t anymore.

We can run these models with the lights on, then turn them off so it’s very dark, and they still work. We can get something working in the lab and have the same code run on a trade-show floor with no changes.

Ten or fifteen years ago, you trained a new model for every new task. That bottleneck is largely gone. Onboarding a new task is dramatically faster.

So what’s still hard?

Hardware design, mostly. Gripper finger design matters a lot, and we still tend to design new fingers for every new part. Regrip stations, the fixtures the robot uses to put a part down and grab it again in a different orientation, are another bottleneck. Designing those is slow, iterative, and not very scalable. The software side has gotten dramatically easier; the mechanical side still has work to do.

What does daily use actually look like?

What does a team need to prepare before a robot is up and running?

A quick caveat, what follows is the process we use at Vention. Other vendors will set things up differently, so treat this as one concrete example rather than a universal recipe.

There’s a preparation phase before any operations can happen. At a high level: a CAD model of the part, prepared with the right scaling. A set of candidate grasps defined relative to that CAD model. A collision model of the environment, we use a wrist-mounted camera to scan the workspace, generate a point cloud, then fit collision bodies to it. And a collision model of the robot itself, including whichever gripper and fingers are being used.

For pick-and-place, the picking phase is largely handled by general-purpose software: it uses the CAD model to detect the object, and picks a non-colliding grasp from the candidate set. The placement sequence typically requires some custom code, because that’s the part that varies most between applications.

Where do teams hit friction early on?

The biggest one is alignment on what’s achievable. When we start a conversation with a prospective client, it’s often not clear what’s doable and what isn’t. For pick-and-place, cycle time is a common gap, there are a lot of situations where something can be done, but not as fast as the line needs.

Beyond cycle time, the rule of thumb is simple:

The more structure there is, the easier the problem becomes.

A messy bin is hard. A shallow tray is easier. Parts in slots or on a rack are easier still. The reason isn’t just the success rate, it’s cycle time. Messy bins lead to missed picks, and every retry hits your throughput.

It also helps when clients are open to small process changes. We recently worked with a customer who needed to insert a part deep inside an enclosure. Moving the receptacle outside the enclosure gave the robot the room it needed. The robot arm isn’t as flexible as a human arm, small mechanical tweaks can transform what’s feasible.

And one honest thing about unstructured pick-and-place: there’s a long tail of edge cases. Sometimes the robot twists into a pose it can’t recover from. Sometimes a cable tangles. We try to catch all of these, but it’s hard to catch every one. If a customer is open to five minutes of manual intervention every couple of hours, deployment becomes a lot more practical.

How do you know if it will scale?

What should technical champions look for when evaluating fit?

A few practical criteria. First, cycle time: a 15–20 second cycle is a good starting point for a single-arm system. Two-arm systems are possible but the hardware cost makes ROI harder to justify.

Second, the parts. Rigid, solid objects are much easier to automate than flexible or soft ones. Uniform parts, a bin of one SKU rather than 30 mixed SKUs, are a stronger candidate. And being open to small mechanical adjustments around the cell goes a long way: removing obstructions, allowing the bin to be shaken to reorient parts, that kind of thing.

How do you evaluate whether a solution will scale across sites and use cases?

A zero-shot pipeline is a good lever to evaluate scalability. If you don’t have to train a new model for every use case, you can scale across applications and sites without a custom data-collection effort each time. Because foundation models are robust to environmental conditions like lighting and dust, scaling across physical environments is relatively straightforward.

What’s coming next?

What’s on the near horizon that operations and engineering teams should be tracking?

Sensor-to-action models. The pipeline we described earlier is modular: depth estimation, segmentation, pose estimation, grasp selection, motion planning, each step is its own component. Sensor-to-action models collapse that pipeline. Sensor data goes in, and low-level robot commands come out.

The advantage is dexterity. Behaviors that are very hard to program with classical methods become learnable. If a part is jammed in the corner of a bin, the robot can learn to push it toward the middle and then grab it. It can learn to brace a part against the wall of the bin to get a better grip. These are the kinds of moves that, today, we’d try to engineer around with mechanical fixtures.

There are two ways to train these models. The more popular path right now is teleoperation: a human demonstrates the task, sometimes for hundreds of hours, and the model learns from those demonstrations. The other is self-exploration, the robot tries actions in simulation or the real world and discovers what works. Both still require training, so neither is zero-shot today. The interesting frontier is methods that learn from a small amount of demonstration data, five or six hours rather than hundreds.

It’s still an open question whether the right answer is fully end-to-end learning or a hybrid where classical methods handle parts of the pipeline and learned policies handle the dextrous moments. We’re actively exploring it.

We don’t want to be designing new fingers all the time. We don’t want to be designing regrip stations all the time. If we can compensate by using more intelligent planning that comes from learning-based methods, deploying robots gets a lot cheaper and more scalable.

That’s the bet, that the next leap in ease of use isn’t going to come from a better fixture, it’s going to come from a smarter robot.

What This Means for Manufacturing Teams

The “easy to deploy” claim has gotten more credible in the last few years. The software is robust and general enough to handle many pick-and-place applications, but friction still remains in the physical setup, in cycle-time math, and in being honest about where a use case falls on the structured-to-unstructured spectrum.

The questions that actually predict success aren’t “Is the AI good enough?”, but “Is my cycle time achievable with one arm?”, “Are my parts rigid and uniform?”, “Am I willing to make a small process tweak to give the robot room to work?”, and “Am I evaluating a pipeline that can be redeployed at the next site without retraining?”

If the answers point in the right direction, a Physical AI-based solution may already be within reach. Either way, the next wave of learning-based dexterity won’t just change what’s possible. It will change what counts as ready.

***

Want to see how Vention’s Rapid Operator AI handles your use case?

Talk to our team about your cycle time, and what a deployment timeline could look like.